Why AI Feels Hard for Java Developers (And Why It Doesn't Have to Be)

If you are a Java developer who has spent the last decade in an enterprise building Spring Boot microservices, writing SQL queries, wrestling with Kubernetes YAML, skimming through logs in ELK and searching Stack Overflow, the recent AI boom probably feels… uncomfortable.

You read blog posts that jump straight into Python notebooks.

You see demos that work once and then magically fail.

You hear words like agents, hallucinations, embeddings, vectors and temperature, and none of them fit neatly into how you normally build Spring Boot applications.

You see X (formerly Twitter) flooded with posts about new Python libraries for AI popping up every day.

It feels like déjà vu from the early days of JavaScript frameworks for frontend development.

For a long time, the AI/ML world looked like a bunch of Python scripts, Jupyter notebooks, workflow orchestrators and research papers. Things changed after the research paper “Attention Is All You Need” was published in 2017 by researchers at Google. The paper introduced the Transformer architecture, which is the foundation of many AI models, including the ones behind ChatGPT, Claude and Gemini.

So, coming back to the Java developer, let’s start with an honest statement:

AI feels hard for Java developers because it breaks many assumptions we have learned over years of building reliable software. It lacks the structure, the type safety and the predictable platform (the JVM) that we rely on.

But here is the good news:

The landscape is shifting. AI projects are moving out of the research and prototype phase into real production environments, and production is where Java lives. Enterprise AI needs Java-style thinking and a stable platform more than ever.

This post explains why AI feels hard, what is actually different, and where Java fits perfectly in this new AI world.

AI hype vs reality for enterprise Java teams

On social media, AI is often portrayed as a “magic brain”. In the enterprise, it is just another downstream dependency that has to be contained in a constrained environment, like any other software component.

There are a lot of hyped statements circulating. You might have heard “This is the end of software engineering”, “We don’t need architecture anymore”, “Prompt engineering is all you need”, “You can just drag and drop and everything works” and, most importantly, “You need a PhD in mathematics to build an AI application”.

The reality is that most AI work in enterprises is orchestration: connecting your existing business data to a Large Language Model (LLM) via an API. This is where security, cost, compliance, monitoring and auditability matter the most. LLMs are not magical brains; they are very good text generators that must be used carefully.

So as a Java developer, you don’t need to create an LLM from scratch. You need to do what you were already doing: build secure, scalable systems, manage data pipelines, set up monitoring and make sure the system survives when a million users hit it at once.

How LLM apps differ from traditional Spring Boot apps

From conversations with fellow Java developers, I have observed that the biggest hurdle isn’t the code; it’s the mental model. You have to rewire how you think about developing LLM apps. It is a shift similar to the one you made when moving from thinking in a SQL data model to thinking in a NoSQL data model.

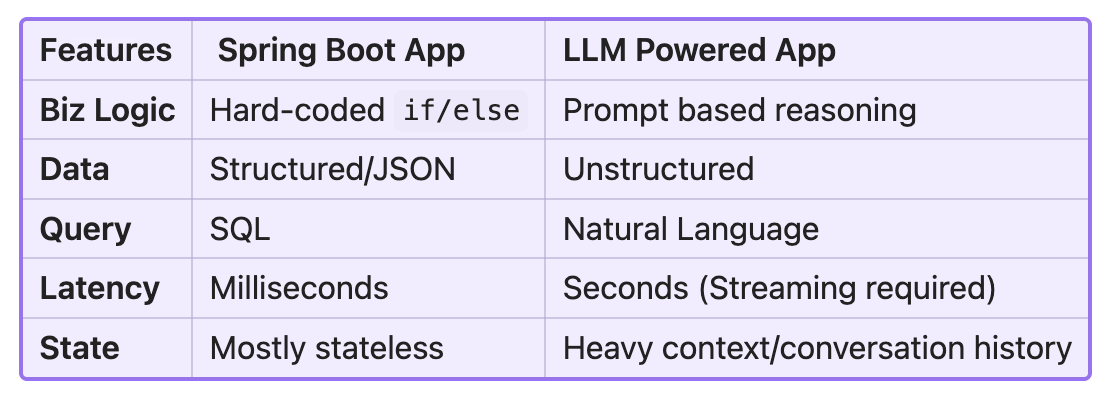

Let’s compare a traditional Spring Boot app with an LLM-based app.

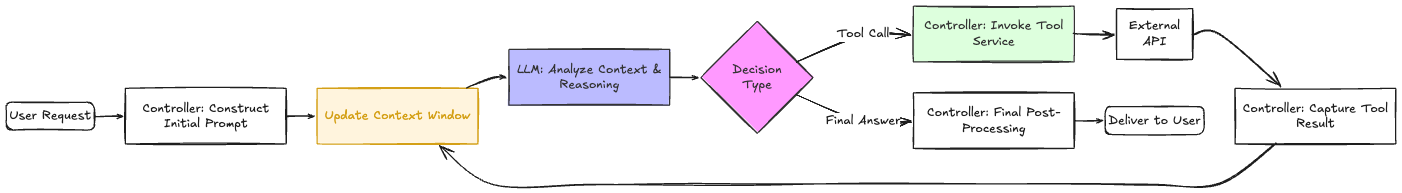

In a traditional Spring Boot app, the request flow is linear.

The key characteristics of this flow are: the same input gives the same output, business logic is deterministic, bugs can be reproduced and tests are straightforward.

In an LLM-based app, by contrast, the request flow is often iterative and stateful, which is called an “agentic loop”. Instead of issuing a single database query, your application might ask the LLM a question, receive a request for more data, fetch that data and send it back to the LLM.

The key characteristics of this flow are: the same input can give different outputs, the output depends on probabilities, bugs are not always reproducible and tests are not straightforward.
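To make the agentic loop a little more concrete, here is a minimal sketch in plain Java. Everything in it is an assumption made for illustration: the `Model` interface, the `Reply` types, the `ORDER_STATUS` map and the scripted `fakeModel()` are hypothetical stand-ins, not the API of any real LLM client. The point is only the shape of the loop: ask, get a request for data, fetch it, ask again, and stop after a bounded number of steps.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Minimal agentic loop: the model may ask for data before it gives a final answer. */
final class AgenticLoopSketch {

    /** A model reply is either a final answer or a request for more data. */
    interface Reply {}
    record Answer(String text) implements Reply {}
    record DataRequest(String key) implements Reply {}

    /** Hypothetical stand-in for an LLM client; a real one would call a remote API. */
    interface Model {
        Reply next(List<String> conversation);
    }

    /** Hypothetical stand-in for a database or an internal service. */
    static final Map<String, String> ORDER_STATUS = Map.of("order-42", "SHIPPED");

    /** Runs the loop, capped at maxSteps so a confused model cannot spin forever. */
    static String run(Model model, String question, int maxSteps) {
        List<String> conversation = new ArrayList<>();
        conversation.add("user: " + question);
        for (int step = 0; step < maxSteps; step++) {
            Reply reply = model.next(conversation);
            if (reply instanceof Answer answer) {
                return answer.text();
            }
            if (reply instanceof DataRequest request) {
                // Fetch the requested data and append it to the conversation state.
                String data = ORDER_STATUS.getOrDefault(request.key(), "UNKNOWN");
                conversation.add("tool: " + request.key() + " = " + data);
            }
        }
        return "Sorry, I could not complete that request.";
    }

    /** Scripted fake model: asks for the order first, then answers from the tool result. */
    static Model fakeModel() {
        return conversation -> {
            String last = conversation.get(conversation.size() - 1);
            if (last.startsWith("tool: ")) {
                return new Answer("Your order status: " + last.substring(last.indexOf("= ") + 2));
            }
            return new DataRequest("order-42");
        };
    }

    public static void main(String[] args) {
        System.out.println(run(fakeModel(), "Where is order-42?", 5));
    }
}
```

Notice that the conversation list is the state, and the step cap is the kind of defensive limit you would put around any unreliable downstream dependency.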

Table: traditional enterprise app features compared with new-generation LLM-powered apps.

Deterministic vs probabilistic systems

As Java developers, we love determinism. If you pass 1 and 1 to a method that implements addition, you expect 2 every time; the method either works or throws an exception. The logic paths are known and hard-coded, which makes the code easy to debug and maintain.

LLMs, on the other hand, are probabilistic systems: they predict the likely next piece of text (a token) and generate it. If you ask the same question a second time, you might get a different answer. To us, such a system feels “flaky”. It can be controlled to a certain extent using “guardrails”, but as developers we should be prepared to write code that expects uncertainty.
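One simple way to picture "code that expects uncertainty" is to treat every model reply as untrusted text: parse it into a strict type, and retry a bounded number of times if it does not fit. The sketch below is only an illustration under my own assumptions. The `Sentiment` enum, the `classify` helper and the scripted replies are hypothetical, and the model is just a `Supplier<String>` rather than a real LLM client.

```java
import java.util.Iterator;
import java.util.List;
import java.util.Locale;
import java.util.Optional;
import java.util.function.Supplier;

/** Treats model output as untrusted text: parse, validate, retry a bounded number of times. */
final class GuardrailSketch {

    /** The only labels the rest of the application is willing to accept. */
    enum Sentiment { POSITIVE, NEGATIVE, NEUTRAL }

    /** Returns a label only if the raw text is exactly one of the allowed values. */
    static Optional<Sentiment> parse(String raw) {
        if (raw == null) {
            return Optional.empty();
        }
        try {
            return Optional.of(Sentiment.valueOf(raw.trim().toUpperCase(Locale.ROOT)));
        } catch (IllegalArgumentException e) {
            return Optional.empty(); // free-form text that is not a valid label
        }
    }

    /** Calls the model up to maxAttempts times; falls back to NEUTRAL if nothing valid comes back. */
    static Sentiment classify(Supplier<String> model, int maxAttempts) {
        for (int attempt = 0; attempt < maxAttempts; attempt++) {
            Optional<Sentiment> parsed = parse(model.get());
            if (parsed.isPresent()) {
                return parsed.get();
            }
        }
        return Sentiment.NEUTRAL;
    }

    public static void main(String[] args) {
        // Scripted replies simulate a model that rambles first and complies on the second call.
        Iterator<String> replies = List.of("I think it is rather good!", " positive ").iterator();
        System.out.println(classify(replies::next, 3));
    }
}
```

The business logic downstream only ever sees a `Sentiment`, never a raw string, which is the same habit we already apply to any external input.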

Where Java shines in AI systems

Python is great for building LLMs from scratch and for data science. But there is a common misconception that Python is the only, or the “natural”, language for building AI apps. In reality, you can build AI applications in Java just fine, and you also get the benefits of a mature, well-tuned JVM platform. Here are a few key areas where Java shines:

Strong Type System:

LLM output is messy text. Java’s type system helps turn it into well-defined domain objects, so your business logic can rely on them instead of guessing at strings.

Virtual Threads (Project Loom):

Calls to an LLM are slow. In the past, each call would block a platform thread and hurt throughput. With virtual threads, a single JVM can keep a huge number of concurrent AI conversations in flight without running out of threads.

Project Panama:

Panama lets the JVM talk directly to high-performance native libraries and AI hardware (GPUs) at near-native speed, closing the gap between Java and low-level AI compute. Check out the talk by compiler architect Maurizio, “Project Panama: Say Goodbye to JNI”.

Project Babylon:

Project Babylon aims to let developers build and run AI models, including LLMs, image classifiers and object detection algorithms, directly in Java. Check out the fascinating talk and demo by Ana Maria and Lize Raes, “Writing GPU-Ready AI Models in Pure Java with Babylon”.

Observability:

The Java ecosystem has mature tools like Micrometer, OpenTelemetry and Grafana to track latency, cost, failures and even AI hallucinations in production.
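The virtual threads point above can be sketched with nothing but the JDK, assuming Java 21 or later. The `slowModelCall` method is a hypothetical stand-in for a blocking LLM HTTP call (the sleep simulates network latency), and the numbers are arbitrary; this is an illustration of the pattern, not a benchmark.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Fans out many slow, blocking "model calls" on virtual threads (assumes Java 21+). */
final class VirtualThreadSketch {

    /** Hypothetical stand-in for a blocking LLM call; the sleep simulates network latency. */
    static String slowModelCall(int conversationId) throws InterruptedException {
        Thread.sleep(200);
        return "reply-" + conversationId;
    }

    /** Starts one virtual thread per conversation and collects replies in submission order. */
    static List<String> callAll(int conversations) {
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            List<Future<String>> futures = new ArrayList<>();
            for (int i = 0; i < conversations; i++) {
                final int id = i;
                futures.add(executor.submit(() -> slowModelCall(id)));
            }
            List<String> replies = new ArrayList<>();
            for (Future<String> future : futures) {
                replies.add(future.get()); // blocks only until this particular call finishes
            }
            return replies;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for callers
            throw new IllegalStateException("interrupted while waiting for model calls", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("a model call failed", e);
        }
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        List<String> replies = callAll(1_000);
        long elapsedMs = (System.nanoTime() - start) / 1_000_000;
        System.out.println(replies.size() + " replies in about " + elapsedMs + " ms");
    }
}
```

Because the simulated calls overlap, the total wall time should be much closer to the latency of one call than to the sum of all of them, and the code stays in the plain blocking style we are used to.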

Python still dominates AI research, but Java is rapidly becoming a strong choice for building and running AI in production for enterprises. Here is a list of frameworks and libraries:

Spring AI

Spring AI brings LLMs and AI services into the Spring ecosystem using familiar Spring patterns. It makes it easy to wire prompts, models, embeddings and tools into production-grade Java apps.

LangChain4j

LangChain4j is the Java counterpart to LangChain for building LLM-powered applications. It provides abstractions for prompts, tools, memory and agents without hiding what’s really happening.

LangGraph4j

LangGraph4j is the Java counterpart to LangGraph and lets you build stateful, multi-step AI workflows using graph-based execution. It’s useful for complex agent flows where control, retries and branching matter, and it provides integrations for Spring AI and LangChain4j.

Jlama

Jlama is a pure Java LLM inference engine focused on running models efficiently on the JVM. It allows you to run and experiment with LLMs locally without Python or native bindings.

JVector

JVector is a Java vector search library optimized for embeddings and similarity search. It integrates well with JVM apps where you want fast, in-process retrieval without calling any external services. JVector is used by Apache Cassandra 5.0 and OpenSearch as the engine for their vector search capabilities.

Embabel

Embabel is a Java framework for building agent-based AI systems with a planning step and a rich domain model in your application. It focuses on making AI behavior predictable, testable and suitable for enterprise use. It embraces GOAP (Goal Oriented Action Planning), a popular AI planning algorithm used in gaming.

The Java AI ecosystem is maturing fast, with frameworks like Spring AI, LangChain4j and LangGraph4j making it easy to build LLM-powered applications and agent workflows using familiar Java patterns. Projects like Jlama and JVector bring model inference and vector search directly onto the JVM, while Embabel focuses on structured agentic workflows suitable for enterprise production.
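If "vector search" still sounds abstract, the core idea fits in a few lines of plain Java. This is a brute-force sketch with hand-made toy vectors, written under my own assumptions; it is not JVector's API, and libraries such as JVector use approximate index structures rather than a linear scan. The `Doc` record and the sample data are hypothetical.

```java
import java.util.Comparator;
import java.util.List;

/** Brute-force nearest-neighbour search over toy embeddings using cosine similarity. */
final class SimilaritySearchSketch {

    /** A stored text together with its embedding vector. */
    record Doc(String text, double[] embedding) {}

    /** Cosine similarity: dot(a, b) / (|a| * |b|); 1.0 means the vectors point the same way. */
    static double cosine(double[] a, double[] b) {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    /** Returns the stored document whose embedding is most similar to the query. */
    static Doc nearest(List<Doc> docs, double[] query) {
        return docs.stream()
                .max(Comparator.comparingDouble((Doc doc) -> cosine(doc.embedding(), query)))
                .orElseThrow();
    }

    public static void main(String[] args) {
        // Hand-made 3-dimensional vectors; real embeddings come from a model and are much larger.
        List<Doc> docs = List.of(
                new Doc("refund policy", new double[] {0.9, 0.1, 0.0}),
                new Doc("shipping times", new double[] {0.1, 0.9, 0.1}),
                new Doc("password reset", new double[] {0.0, 0.2, 0.9}));
        double[] query = {0.8, 0.2, 0.1}; // pretend embedding of "how do I get my money back?"
        System.out.println(nearest(docs, query).text());
    }
}
```

With these toy vectors the query lands closest to "refund policy". In a real application the embeddings would come from an embedding model, and the scan would be replaced by an index once the number of documents grows.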

In the next post, I will cover the key terminology and jargon of LLMs to help you better understand how they work.